Django, SQLite and writes

February 2012

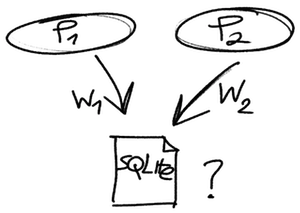

There seems to be some confusion about whether the SQLite embedded database has transactions and how it handles simultaneous writes from multiple processes.

- SQLite does support transactions. It has proper

ACIDfunctionality. - Multiple simultaneous writes are not supported. A write operation locks the whole database file for the duration of the transaction. Other write operations are put into waiting queue with a timeout.

- Simultaneous reads can proceed even with an active write transaction.

Let's perform a simple test with read and write operations in Django and SQLite:

# settings.py

DATABASES = {

'default': {

'ENGINE': 'django.db.backends.sqlite3',

'NAME': '/home/tomi/djangotest.sqlite',

'USER': '', # Not used with sqlite3.

'PASSWORD': '', # Not used with sqlite3.

'HOST': '', # Not used with sqlite3.

'PORT': '', # Not used with sqlite3.

# for sqlite write lock timeout

'OPTIONS': {

'timeout': 5,

}

}

}

# urls.py

urlpatterns = patterns('',

#...

url(r'testread$', 'myapp.views.testread'),

url(r'testwrite$', 'myapp.views.testwrite'),

url(r'testwritelong$', 'myapp.views.testwritelong'),

#...

# models.py

class Dummy(models.Model):

created = models.DateTimeField(auto_now_add=True)

title = models.CharField(max_length=200)

# views.py

from django.db import transaction

from myapp.models import Dummy

import time

def testread(request):

# read dummy objects

dummylist = Dummy.objects.all()

return HttpResponse("test read")

def testwrite(request):

# create a dummy object, short transaction

c = Dummy()

c.title = "t1"

c.save()

return HttpResponse("test write")

@transaction.commit_on_success

def testwritelong(request):

# this code executes inside a transaction for 10s

_debug("testlongwrite")

c = Dummy()

c.title = "t2"

c.save()

time.sleep(10)

_debug("testlongwrite 2")

return HttpResponse("test long write")

Now run the server in an environment with multiple request processes. The

Django

development server "python manage.py runserver" won't do because it only runs a single process. I'm using uWSGI for my projects.

Launch a browser and open three tabs to urls: "localhost:8000/testread", "localhost:8000/testwrite" and "localhost:8000/testwritelong".

A normal Django model write operation is quick. Each model.save()

is wrapped inside a short-lived transaction. Database locking is thus not a

big problem. To test how the database gets locked during a long write

transaction, invoke 'testwritelong' and then simultaneously another

'testwrite'. As you can see, the second write has to wait until the first

write completes. After 5 seconds, the timeout will expire and Django throws a

"Database is locked"

error. 'testread' works

fine even with a long 'testwritelong'. You can set a longer wait timeout in

settings.py.

Django and SQLite work fine in small web projects that run in a single machine, and no data gets lost with moderate write activity and even with longer transactions. However, when you run multiple servers, use a server-based database, as SQLite may have trouble when run over network filesystem.

Back